AMD vs Nvidia at CES 2026: Two contrasting paths in the AI chip race

CES 2026 marked a clear inflection point in how AMD and Nvidia are positioning themselves in the next phase of the AI cycle. AMD used the event to push the idea of AI as a distributed capability, spreading intelligence across PCs, embedded systems and edge environments. Nvidia, by contrast, leaned further into scale, reinforcing its dominance at the very top of the AI stack with tightly integrated supercomputing platforms for hyperscalers.

In markets, the divergence is already reflected in positioning. Nvidia (NVDA) is trading close to the upper end of its 52-week range, hovering between the high-$180s and low-$190s after a powerful 2025 driven by data-centre demand and sustained AI capital expenditure. AMD (AMD), meanwhile, has gained roughly 70% over the past year but continues to trade at a valuation discount, with investors increasingly viewing it as a leveraged AI exposure with room to close the gap if execution improves.

AMD: “AI everywhere” from PC to accelerator

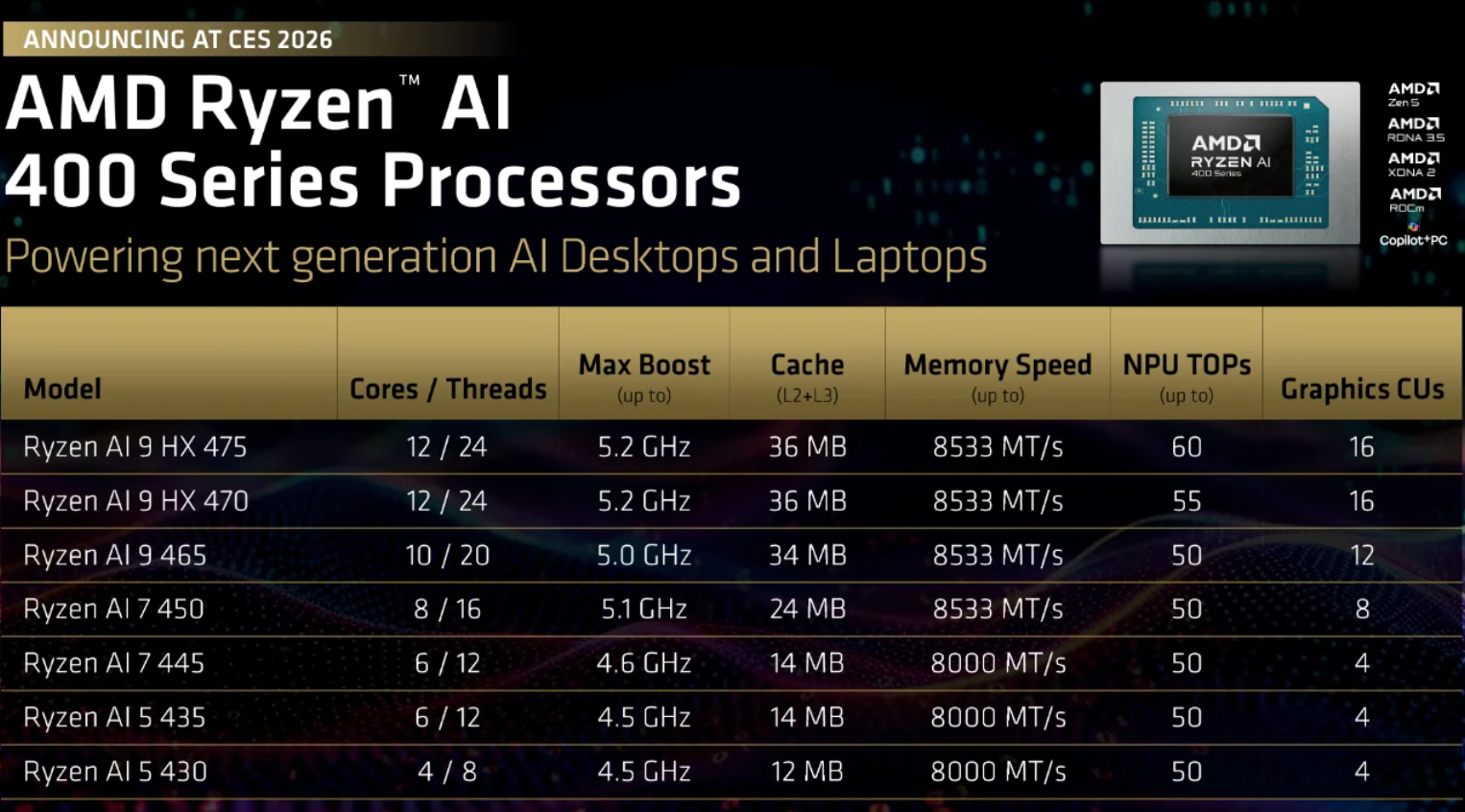

At CES, AMD broadened its Ryzen AI push with the launch of Ryzen AI 400 and AI Max+ processors for laptops, alongside a new Ryzen AI Embedded range built on Zen 5. The strategy is clear: turn the massive global PC install base into a decentralised AI layer, capable of handling inference and real-time workloads closer to the user. Management expects OEM adoption to build steadily through 2026 as AI-enabled PCs become mainstream rather than niche.

In the data centre, AMD continues to flesh out its MI300 and MI455 accelerator roadmap, positioning these chips as more open and cost-competitive alternatives to Nvidia’s offerings for both training and inference. Analysts have flagged the potential for adoption by large AI developers seeking diversification. From a market standpoint, AMD still fits the profile of a share-gain story: smaller scale today, but meaningful upside if software maturity, accelerator wins and PC attach rates start to compound.

Nvidia: doubling down on AI supercomputers

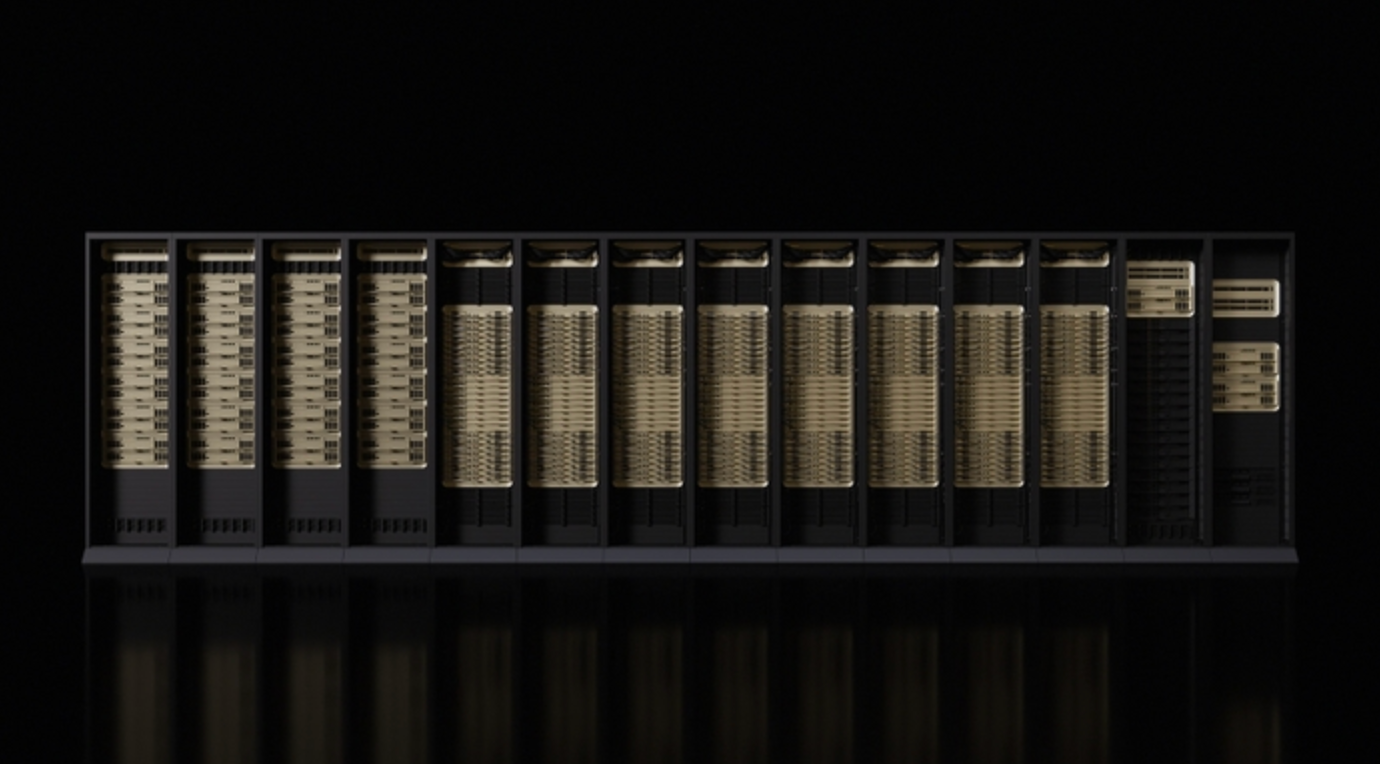

Nvidia’s response came in the form of its Rubin platform - a tightly integrated stack built around new Rubin GPUs, Vera CPUs, and upgraded NVLink 6 and Spectrum-X networking. Rather than selling individual components, Nvidia is reinforcing its position as a provider of end-to-end AI systems designed to operate as unified supercomputers.

Rubin is specifically targeted at so-called “AI factories,” which are built to support advanced models and agentic workloads at scale, with initial deployments expected in the second half of 2026. Crucially, Nvidia has lined up support from all four major hyperscalers - AWS, Azure, Google Cloud and Oracle Cloud - alongside specialist GPU cloud providers. For traders, this keeps NVDA firmly positioned as the benchmark AI stock: expensive by traditional measures, but anchored by long-duration cloud spending. The primary risk remains any visible slowdown in AI budgets or acceleration in the shift toward custom silicon.

Why it matters

CES 2026 highlighted that the AI trade is becoming more complex. The simple equation of AI investment translating directly into GPU upside is no longer sufficient. Attention is shifting toward where workloads are deployed, how efficiently they run, and which vendors can defend margins as inference scales and competition intensifies.

Nvidia’s approach cements its central role in hyperscaler infrastructure, but also increases exposure to pricing pressure as training demand normalises and inference becomes more cost-sensitive. At current valuations, execution matters more than ever.

AMD’s path is different. By spreading AI across PCs, industrial systems and embedded use cases, it stands to benefit if adoption broadens beyond large data centres. That makes its story less about dominance and more about steady penetration across a widening range of applications.

The takeaway from CES is that AI is fragmenting into multiple opportunity sets. The next phase will be driven by deployment economics and real-world usage, not just headline compute growth.

Strategic read-through for the AI-chips trade

CES reinforced that neither company is competing on chips alone. Both are delivering platforms that combine silicon, interconnects, software ecosystems and reference designs - with CUDA and ROCm at the centre of their respective strategies.

For investors, the key questions are evolving. Which vendors capture incremental hyperscaler workloads? How much pricing power survives as competition and regulation increase? And how resilient is AI spending if macro conditions tighten? Within this framework, Nvidia remains the core exposure to large-scale AI infrastructure, while AMD offers higher-beta potential if its “AI everywhere” strategy translates into tangible market share gains over the next 12–24 months.

Key takeaway

CES 2026 underscored a clear strategic split. Nvidia continues to represent the most direct, system-level exposure to hyperscaler AI investment, but with growing sensitivity to inference economics, competition and macro conditions. AMD offers a more distributed growth story, with greater upside - and greater execution risk - tied to AI adoption across PCs, edge, and alternative accelerator platforms.

As a result, the AI-chip trade is shifting away from broad momentum and toward selectivity, where efficiency, platform lock-in and workload mix are becoming just as important as raw performance.

AMD and Nvidia technical outlook

AMD is stabilising after a sharp pullback from the $260 highs, with price consolidating around the $223 region as buying interest cautiously returns. The broader structure remains range-bound, but momentum is improving: the RSI is trending back above its midpoint, suggesting a gradual rebuild in bullish sentiment rather than an aggressive risk-on move.

Key downside levels remain unchanged. The $187 area is critical short-term support, with a break likely to trigger renewed selling pressure, while the $155 zone marks longer-term trend support. On the upside, resistance near $260 continues to limit progress, implying that a sustained increase in demand would be needed to confirm a fresh uptrend.

Nvidia is also attempting to regain balance following its recent decline. Price has reclaimed the $189 area and is moving back toward the centre of its broader range after bouncing from the $170 support zone. Momentum indicators are turning constructive, with the RSI pushing back above the midline, which points to strengthening participation rather than a purely technical rebound.

However, upside remains constrained by resistance around $196 and the more significant $208 level, where previous advances have stalled. As long as NVDA holds above $170, the broader structure remains intact, but a decisive break above $196 would be needed to signal a more sustainable bullish continuation.
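To put the levels above in proportion, the distance from each quoted price to its support and resistance zones can be worked out as a simple percentage. The sketch below is illustrative only: the prices are the approximate figures cited in this section ("around $223" for AMD, "the $189 area" for NVDA), and the names `LEVELS`, `pct_distance` and `level_table` are hypothetical helpers, not part of any trading library.

```python
# Approximate prices and levels as quoted in the technical outlook above.
# These are assumptions taken from the text, not live market data.
LEVELS = {
    "AMD": {"price": 223.0, "support": [187.0, 155.0], "resistance": [260.0]},
    "NVDA": {"price": 189.0, "support": [170.0], "resistance": [196.0, 208.0]},
}


def pct_distance(price, level):
    """Percentage move needed to go from price to level (negative = below price)."""
    return (level - price) / price * 100.0


def level_table(levels):
    """Return (ticker, kind, level, pct) rows, with pct rounded to one decimal place."""
    rows = []
    for ticker, data in levels.items():
        for kind in ("support", "resistance"):
            for level in data[kind]:
                pct = round(pct_distance(data["price"], level), 1)
                rows.append((ticker, kind, level, pct))
    return rows


if __name__ == "__main__":
    for ticker, kind, level, pct in level_table(LEVELS):
        print(f"{ticker:<5}{kind:<11}${level:>4.0f}  {pct:+.1f}%")
```

On these figures, AMD sits roughly 16% away from both its $187 support and its $260 resistance, while NVDA is under 4% below $196 and about 10% from either $170 or $208.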

The performance figures quoted refer to the past, and past performance is not a guarantee of, or a reliable guide to, future performance.